Creating access filters in live networks

Every vendor or consultant presents fantastic solutions that would work in the ideal environment they think every customer has. The truth is that only newly designed networks look like that; the rest are more heterogeneous, with many exceptions and fringe cases.

And since network engineers commonly don't talk to the application people, the resulting lack of know-how about the traffic flows in their own networks has serious implications.

With inexperienced architects or engineers, this usually ends in an even more non-standard environment or, in the worst case, a dysfunctional network.

Another issue is the need to redesign the network in order to re-route traffic via filtering devices, which can often cause downtime or outages when such a solution is implemented.

So in this post, I'll share a technique for creating access lists for network separation without causing major interruptions or needing downtime for the changes.

Many aspects (like performance, location, and support) are outside the scope of this post, but they have to be considered before using filtering in production networks.

The examples below assume an L3 Cisco network with several internal VLANs (where the ACLs would be applied), but the method works for L2 networks (applying ACLs on ports or uplinks) as well.

Phase 1: Observation

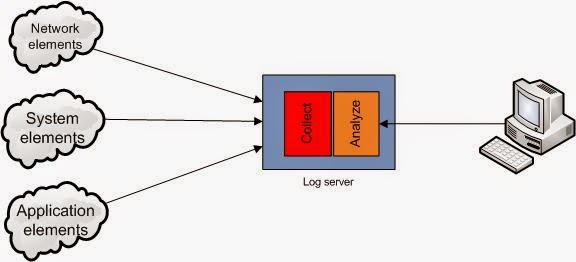

Since the primary problem of most network engineers is not knowing what packets flow through their network, the first step has to be finding that out. This is accomplished by creating an access list that allows all traffic through and logs the hits:

ip access-list extended ThisVLAN100

1000 permit ip any any log

To prevent overloading the log server, it's better to put known traffic flows into the ACL from the start. A good example is DNS traffic, which is very common in server as well as user networks and is almost certain to occur.

Phase 2: Adaptation

In highly populated networks, there will be loads of traffic, and the logs will grow faster than a human can read them. So the goal of this phase is to minimize log growth by inserting ACL entries for the most common traffic patterns:

900 permit ip host <some host> any

This phase can have many iterations, in which ACL entries are adjusted to be more generic or more specific (depending on the security level that is acceptable).

The time spent in this phase also depends on how long it takes until all devices have performed all of their traffic patterns, including infrequent ones (e.g. a monthly backup or data upload).
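To decide which entries are worth adding in each iteration, it helps to summarize the logs instead of reading them line by line. Below is a minimal Python sketch that counts logged packets per flow. It assumes the TCP/UDP form of the IOS ACL log message (`%SEC-6-IPACCESSLOGP`); the exact format can differ by platform and software version, so check it against your own logs. The function name `summarize_flows`, the sample addresses, and the hostname `sw1` are made up for illustration.

```python
import re
from collections import Counter

# Assumed message format (TCP/UDP only), for example:
# %SEC-6-IPACCESSLOGP: list ThisVLAN100 permitted tcp 10.1.100.21(49822) -> 10.1.20.8(443), 1 packet
LOG_RE = re.compile(
    r"%SEC-6-IPACCESSLOGP: list (?P<acl>\S+) (?P<action>permitted|denied) "
    r"(?P<proto>tcp|udp) (?P<src>[\d.]+)\((?P<sport>\d+)\) -> "
    r"(?P<dst>[\d.]+)\((?P<dport>\d+)\), (?P<count>\d+) packets?"
)


def summarize_flows(lines, acl_name):
    """Count logged packets per (proto, src, dst, dst_port) for one ACL."""
    flows = Counter()
    for line in lines:
        m = LOG_RE.search(line)
        if m is None or m["acl"] != acl_name:
            continue  # not an ACL log line, or it belongs to another ACL
        # The source port is ignored because it is usually ephemeral for
        # client-initiated traffic; reply traffic would need the opposite view.
        key = (m["proto"], m["src"], m["dst"], int(m["dport"]))
        flows[key] += int(m["count"])
    return flows


# Hypothetical sample lines, for illustration only.
SAMPLE_LOG = [
    "Jan  1 10:00:01 sw1 %SEC-6-IPACCESSLOGP: list ThisVLAN100 permitted udp 10.1.100.21(53124) -> 10.1.10.5(53), 1 packet",
    "Jan  1 10:00:02 sw1 %SEC-6-IPACCESSLOGP: list ThisVLAN100 permitted udp 10.1.100.22(40112) -> 10.1.10.5(53), 4 packets",
    "Jan  1 10:00:03 sw1 %SEC-6-IPACCESSLOGP: list ThisVLAN100 permitted tcp 10.1.100.21(49822) -> 10.1.20.8(443), 1 packet",
    "Jan  1 10:00:04 sw1 %SEC-6-IPACCESSLOGP: list ThisVLAN100 permitted udp 10.1.100.21(53999) -> 10.1.10.5(53), 2 packets",
    "Jan  1 10:00:05 sw1 %SEC-6-IPACCESSLOGP: list OtherACL permitted tcp 10.9.9.9(40000) -> 10.1.20.8(22), 1 packet",
]

if __name__ == "__main__":
    for flow, packets in summarize_flows(SAMPLE_LOG, "ThisVLAN100").most_common():
        print(packets, *flow)
```

The flows at the top of the output are the first candidates for new entries above the catch-all rule; whether to permit them per host or per subnet is still a decision for a human.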

Phase 3: Conclusion

With the ACL populated with the identified traffic flows that are acceptable and expected, the final step is to change the last entry:

no 1000

1000 deny ip any any log

It is always advisable to log the last entry: when some application in the network changes, this provides a good source of information for troubleshooting.

If the Adaptation phase was successful, there should be almost no hits on this rule.

I should mention that in internet-facing networks this last rule would look a bit different, and there would be other rules for logging anomalous traffic:

999 deny ip <other internal networks> <protected network> log

1000 permit ip <protected network> any

But I hope you get the picture of how this process establishes ACL filters in production networks without major impact.

Next steps

Depending on the network architecture, this process can be automated either by monitoring the logs and generating the changes to be applied, or by using a "canary deployment" and replicating the result to the rest of the network.

The knowledge gathered in phases 1 and 2 should also help the network team understand the traffic patterns better for future design and improvement projects.
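As a rough illustration of the "generating changes" half of that automation, here is a small Python sketch that turns observed flows into candidate ACL lines for review. Everything in it is an assumption made for the example: the function name `candidate_entries`, the packet threshold, the sequence numbering, and the sample flows. It only produces text, and the output should be reviewed by an engineer before anything is applied to a device.

```python
def candidate_entries(flows, min_packets=10, first_seq=100, step=10):
    """Turn observed flows into candidate ACL lines for human review.

    flows maps (proto, src, dst, dst_port) -> number of logged packets.
    Flows seen fewer than min_packets times are left for the logged
    catch-all rule, so rare traffic still shows up in the logs.
    """
    entries = []
    seq = first_seq
    # Most frequent flows first, so they are matched early in the ACL.
    ordered = sorted(flows.items(), key=lambda item: (-item[1], item[0]))
    for (proto, src, dst, dport), packets in ordered:
        if packets < min_packets:
            continue
        entries.append(f"{seq} permit {proto} host {src} host {dst} eq {dport}")
        seq += step
    return entries


# Hypothetical observation result, for illustration only.
OBSERVED = {
    ("udp", "10.1.100.22", "10.1.10.5", 53): 40,
    ("tcp", "10.1.100.21", "10.1.20.8", 443): 25,
    ("tcp", "10.1.100.30", "10.1.20.9", 8080): 2,
}

if __name__ == "__main__":
    for entry in candidate_entries(OBSERVED):
        print(entry)
```

The sketch emits host-to-host entries on purpose. Widening them to subnets or port ranges is the "more generic or specific" trade-off from phase 2, and that is better left to a person than to a script.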